Category: Data Science

Things to consider when driving machine intelligence within a large organization

While modern-day A.I. or machine intelligence (MI) hype revolves around big “eureka” moments and broad-scale “disruption”, expecting these events to occur regularly is unrealistic. The reality is that working with AIs on simple routine tasks will drive better decision making systemically as people become comfortable with the questions and strategies that are now possible. Hopefully, it will also build the foundation for more of those eureka(!) moments. Regardless, technological instruments allow workers to process information at a faster rate while increasing the precision of understanding, enabling more complex and accurate strategies. In short, it’s now possible to do in hours what once took weeks. Below are a few things I’ve found helpful to think about when driving machine intelligence at a large organization, as well as what is possible.

- Algorithm aversion: humans are more willing to accept flawed human judgment than flawed machine judgment. People are very judgmental if a machine makes a mistake, even within the lowest margin of error. Yet decisions generated by simple algorithms are often more accurate than those made by experts, even when the experts have access to more information than the formulas use. For further elaboration on making better predictions, the book Superforecasting is a must-read.

- Silos! The value of keeping your data or information secret as a competitive edge does not outweigh the value of the innovation and insights that become possible when data is liberated within the broader organization. Where possible, build what I call diplomatic back channels, where teams or analysts can share data with each other.

- Build a culture of capacity. Managers are willing to spend 42 percent more on an outside competitor’s ideas. As Leigh Thompson, a professor of management and organizations at the Kellogg School, says: “We bring in outside people to tell us something that we already know,” because it paradoxically means all the wannabe “winners” in the team can avoid losing face. It’s not a bad thing to seek external help, but if this is how most of your novel work gets done and where you go to get your ideas, you have systemic problems. As a result, the organization will fail to build strategic and technological muscle, which is likely to create a culture that emphasizes generalists, not novel technical thinkers, in leadership roles. In turn, you end up with an environment where technology is fitted to legacy processes and thinking, when it should be the other way around if you want to stay relevant. Avoid the temptation to outsource everything because nothing seems to be going anywhere right away. That 100-page PowerPoint deck from your consultant is only going to help in the most superficial of ways if you don’t have the infrastructure to drive the suggested outputs.

OSINT One. Experts Zero.

Our traditional institutions, leaders, and experts have shown themselves incapable of understanding and accounting for the multidimensionality and connectivity of systems and events. Consider the rise of far-right parties in Europe; the disillusionment with European Parliament elections, as evidenced by voter turnout in 2009 and 2014 (despite more money being spent than ever); Brexit; and now the election of Donald Trump as president of the United States of America. In short, there is little reason to trust experts without multiple data streams to contextualize and back up their hypotheses.

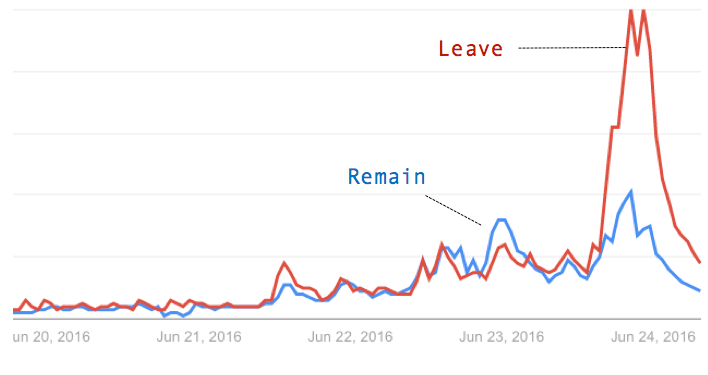

How could experts get it wrong? Frankly, it’s time to shift away from the conventional ways of making sense of events in the political, market, and business domains. The first step is reimagining information from a cognitive linguistic standpoint, probably the most neglected area in business and politics, at least within the mainstream. The basic idea? Words have meaning. Meaning generates beliefs. Beliefs create outcomes, which in turn can be quantified. The explosion of mass media, followed by identity-driven media, social media, and alternative media, is a problem: we are at the mercy of media systems that frame our reality. If you doubt this, reference the charts below. Google Trends is deadly accurate in illustrating what is on people’s minds, and whoever is on people’s minds the most, for bad or good, wins, at least when it comes to U.S. presidential elections. The saying that bad press is good press is quantified here, as is George Lakoff’s thinking on framing and repetition (Google search trends can be used to easily see which frame is winning, by the way).

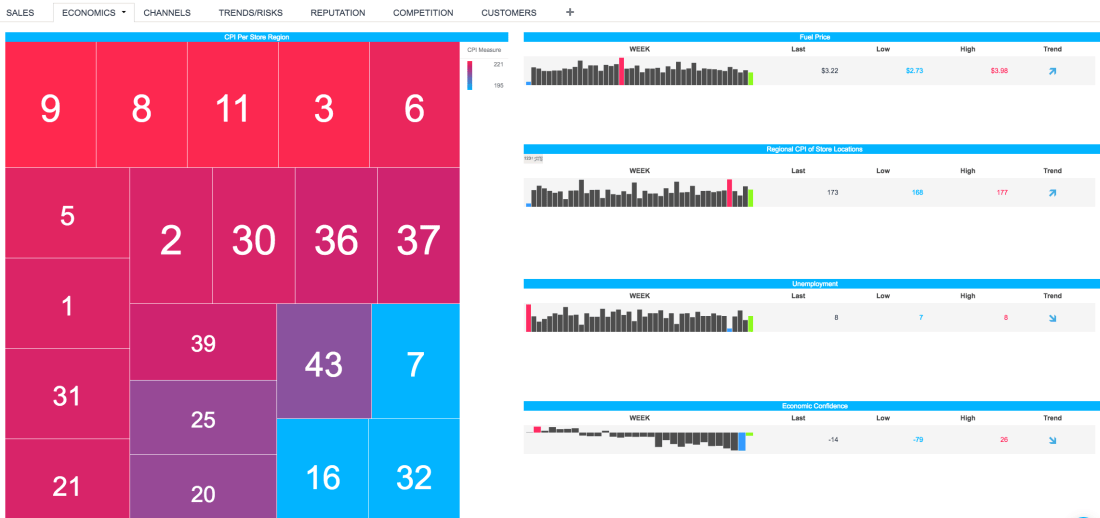

Within this system, there is little reason to challenge one’s own beliefs, and almost nothing forces anyone to do so. Institutions and old media systems used to be able to bottleneck this: they were the only ones with a soapbox, and information moved slowly enough. To outthink current information systems, there is a need for unorthodox data, such as open-source intelligence (OSINT), and for creativity, both of which traditional systems of measurement and strategy lack. To a fault, businesses, markets, and people strive for simple, linear, and binary solutions or answers. Unfortunately, complex systems, i.e., the world we live in, don’t dashboard into nice simple charts like the one below. The root causes of issues are ignored, untested, and left without context, which creates only a superficial understanding of what affects business initiatives.

I know this may feel like a reach in terms of how all that is mentioned is connected, so more on OSINT, data, framing, information, outcomes, and markets to come.

Cheers, Chandler

The Brexit

While investors were in shock, open-source signals such as Google Trends often pointed to “Leave” predominating, illustrating expert and market biases. Perhaps they should work on integrating these unconventional data streams better (sorry, couldn’t help it). The UK’s decision to exit the EU is part of a larger global phenomenon that could have been understood better with open-source data, not just market data.

The world is growing more complex. Information is moving faster. Humans have not evolved to retain or understand this mass output of (dis)information in any logical way. In response, a retreat to simple explanations and self-censorship towards new ideas that might challenge one’s frame become the norm. Populist decisions are made and embraced, oftentimes in reaction to the establishment or elite. Multinational corporations and elites will need to step outside of their bubble and take note as nationalist, albeit sometimes isolationist, figures and movements gain in both popularity and power: Donald Trump, Bernie Sanders, President Erdogan of Turkey, Marine Le Pen’s Front National in France, Boris Johnson (former Mayor of London and Brexit backer, with a good chance of taking David Cameron’s place), Germany’s AfD, and the 5 Star Movement in Italy.

In addition to receiving more media coverage, the Leave campaign had more people associated with it, which is an advantage. During a political campaign, choices and policy lines are anything but logical; they tend to fall along emotional lines, so institutional communications must have a noticeable figurehead, especially in the media age. It says something when the top people associated with Remain are Barack Obama, Janet Yellen, and Christine Lagarde. Note that David Cameron is more central to Leave. Nonetheless, the pleas by political outsiders and institutions such as the IMF and World Bank for the UK to remain in the EU potentially caused damage to the “Remain” campaign. UK voters did not want to hear from foreign political elites. This is illustrated by the connection and proximity of the “Obama Red Cluster” to the French right-wing Forest Green cluster (and the results) below. The “Brexit” could lend credence to the possibility of EU exit contagion. There are very real forces in France (led by the Front National) and Italy (led by the 5 Star Movement, which just won big in elections) that are driving hard for secession from the EU and, potentially, the Eurozone.

Ramifications:

- Banks (especially European ones) are fleeing to the gold market, seeking shelter from volatility. While this is to be expected, dividend-based stocks and oil would also be attractive to those seeking stability.

- Thursday’s referendum sent global markets into turmoil. The pound plunged by a record, and the euro slid by the most since it was introduced in 1999. Historically, the British Pound reached an all-time high of 2.86 in December of 1957 and a record low of 1.05 in February of 1985.

- Don’t count on US interest rate hikes. Yellen has expressed concern about global volatility on multiple occasions. The Brexit just added to that. The Bank of England could follow the US Fed and drop interest rates on the GBP to account for market uncertainty.

- If they are aggressive, US companies could use European uncertainty to gain on European competitors. Due to the somber mood within Europe, European companies could be more conservative with investment, leaving them vulnerable.

- Alternatively, Brexit may trigger more aggressive U.S. or global expansion by European companies while the ramifications of Brexit are further understood.

- The political takeaway is that the Remain campaign was relatively sterile, with no figurehead or clear policy issues directly related to the EU. This was reflected by the diversity in associated search terms related to the “Leave” campaign. In addition, Angela Merkel, not an EU leader such as European Commission President Jean-Claude Juncker, was once again seen as the de facto voice of Europe.

Next Generation Metrics

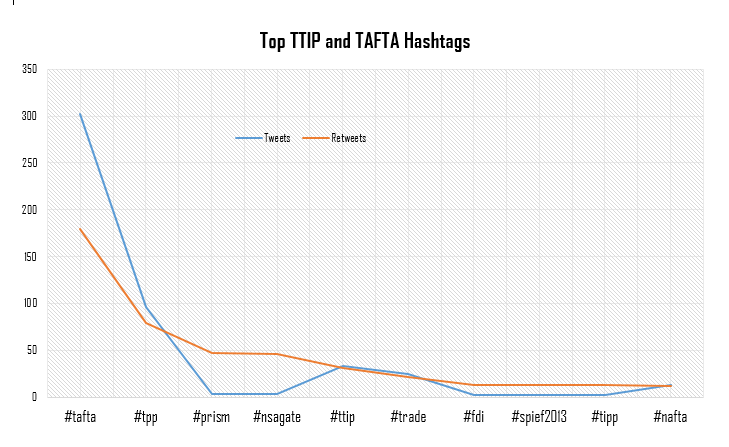

Recently I’ve been thinking of ways to detect bias as well as look into what makes people share. Yes, understanding dynamics and trends over time, like the chart below (Topsy is a great, simple, free tool to get basic Twitter trends), can be helpful, especially with linear forecasting. Nonetheless, these metrics reach their limits when we want to look for deeper meaning, say at the cognitive or “information flow” level.

Enter networks. They enable an understanding of how things connect and exist at a level that is not possible with standard KPIs like volume, publish count, and sentiment over time. By mapping out the network based on entities, extracted locations, and similar text and language characteristics, it’s possible to map coordinates of how a headline, entity, or article exists and connects to other entities within the specific domain. In turn, this creates an analog of the physical world with stunning accuracy, since more information is reported online every day. For example, using online news articles and Bit.ly link data, I found that articles with less centrality to their domain (based on linguistic similarity to the aggregated on-topic news articles), which denotes variables being left out of the article, typically got shared the most on social channels. In short, articles that were narrower in focus, and therefore less representative of the broader domain, tended to be shared more. This is just the tip of the iceberg.
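To make the idea of “centrality based on linguistic similarity” more concrete, here is a minimal sketch. It is not the method used for the analysis above, which the post does not describe in detail. It assumes a simple stand-in: each article becomes a bag-of-words vector, and an article’s centrality is its mean cosine similarity to every other article on the topic. The article names and texts are made up for illustration.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase the text and keep purely alphabetic tokens."""
    return [word for word in text.lower().split() if word.isalpha()]


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def centrality(articles):
    """Mean similarity of each article to all other articles (assumed proxy)."""
    vectors = {name: Counter(tokenize(text)) for name, text in articles.items()}
    scores = {}
    for name, vec in vectors.items():
        others = [cosine(vec, v) for other, v in vectors.items() if other != name]
        scores[name] = sum(others) / len(others) if others else 0.0
    return scores


# Hypothetical on-topic articles, invented for this example.
articles = {
    "broad_overview": "trade talks economy jobs privacy tariffs",
    "economy_piece": "trade talks economy jobs growth",
    "narrow_privacy": "privacy surveillance leaks",
}

scores = centrality(articles)
least_central = min(scores, key=scores.get)
print(least_central, scores)
```

In this toy data the narrowly focused privacy piece comes out as the least central article. Under the finding above, that is the kind of article one would then check against share counts.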

The German election and EU political communications

For this post, I decided to remain old school and mainly rely on search data. It’s pretty basic, but typically offers a great view of what people are interested in. Google’s market share is around 90% in Europe and it’s the most visited site in the world. In my opinion, Google Trends is the largest focus group in the world.

First I looked at the overall search interest in Germany for Angela Merkel and Peer Steinbrück, as well as their parties, the CDU and SPD. Initially, I was curious as to how interest in party identity compared to interest in the politician. To anchor this chart I did the same with the US presidential campaign (the chart below). I have a hunch, and the data seems to be telling me thus far, that the more media-oriented politics becomes (along with everything else in the world), the more important celebrity, authenticity, and individuality become. Take a look at this recent brand analysis done by Forbes. Chris Christie wins, having the highest approval rating, at 78%, of over 3,500 “brands” according to BAV (awesome company). For those who don’t know, Christie is probably the most straightforward, tell-it-like-it-is politician in the country.

So what can we learn from the Google search interest shown below?

- Politics is still about sheer volume and name recognition. For those that think being novel and unique achieves victory over blasting away nonstop in a strategically framed and coordinated way, think again. People tune out if they aren’t interested. Irrelevance is almost always worse than bad PR or sentiment (excluding a case like Anthony Weiner). You simply don’t win if you don’t interest people. If people aren’t talking about you, you’re not interesting. Merkel had more search interest than Steinbrück and over the course of the year probably got 10,000 times more airtime, both good and bad, due to her large role in the euro crisis. In short, repetition is king.

- Framing and a consistent language strategy are vital. Volume can be shown to equate with recognition of a person, and the same easily applies to a policy or issue. Give me a choice between a clever social media strategy and a consistent language strategy, meaning all the key issues are repeated by the party and coordinated as much as possible, and I’ll take the language strategy any day. It’s amazing how often simply being consistent in political communications is overlooked by companies and political leaders in Europe. Social media tends to be a framing conduit, not the reason people mobilize or have opinions.

- The world is growing ever more connected. Look at how global the reporting of the German election was. Obviously, its importance was higher due to Germany’s rising influence, but nonetheless the number of sources from all over the world is impressive. A note for the upcoming EU elections: don’t forget to target the USA and other regions to influence specific regions in Europe. A German constituent might read about a policy in the Financial Times, a Frenchman in the Wall Street Journal, or an American based in Brussels, who knows Europeans who can vote, on Bloomberg.

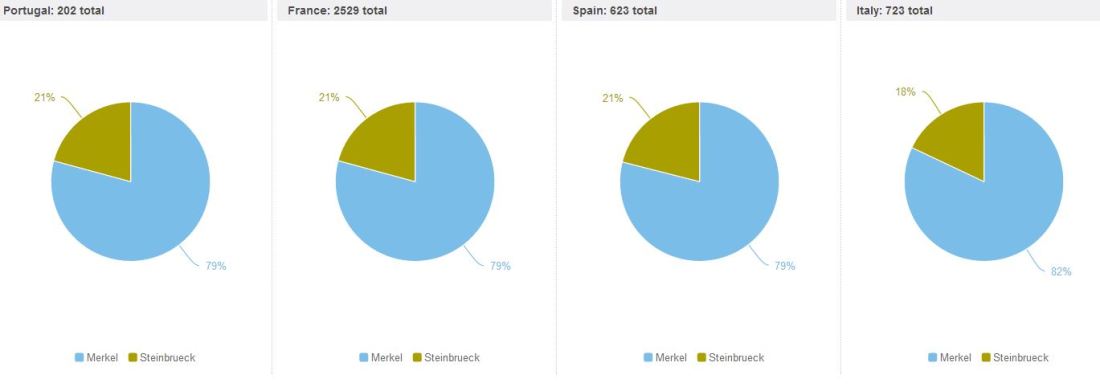

I decided to throw in the Twitter market share of the candidates from August 21st to September 21st, the day prior to the election. I found it interesting to see how closely Belgium and the United States reflect Germany, probably because these countries are looking at the election from more of a spectator view. Meanwhile, southern Europe, which had a vested interest in the election, was pretty much aligned. France, Spain, and Italy seem to report a bit more, and in a similar way, on Merkel, probably due to sharing the same media sources. Unfortunately, I don’t have the time to look into this pattern too much at the moment, but it’s something I’ll continue to think about in the future.

Breakdown of TTIP and TAFTA

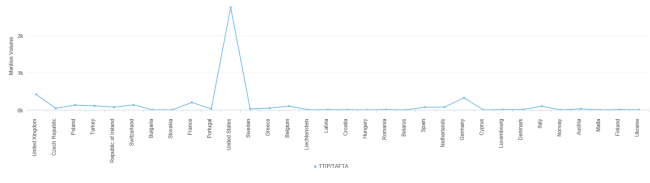

TTIP/TAFTA is a true game changer for both the EU and US in terms of economic value, especially in a time of crisis for the EU. To find out exactly what content people consumed and to analyze policy trends, we mined the web (big data). At the moment TTIP/TAFTA is not without issues, as we all know in Brussels: #NSAGate, data privacy, and IP are slowing down negotiations (we’re looking at you, France), and this is generally what the data had to say as well.

Overview: 5,505 mentions of TTIP/TAFTA in the last 100 days, too large a number for businesses and institutions to ignore. In short, if you have something to say about it, you need to join the conversation ASAP (indecision is a decision).

The biggest uptick, a total of 300 mentions, came when Obama spoke at the G-8 summit in Northern Ireland on June 17th, when trade talks began. The key theme at this time was the potential boost to the economy. The official press release is here.

“The London-based Centre for Economic Policy Research estimates a pact – to be known as the Transatlantic Trade and Investment Partnership – could boost the EU economy by 119 billion euros (101.2 billion pounds) a year, and the U.S. economy by 95 billion euros. However, a report commissioned by Germany’s non-profit Bertelsmann Foundation and published on Monday, said the United States may benefit more than Europe. A deal could increase GDP per capita in the United States by 13 percent over the long term but by only 5 percent on average for the European Union, the study found.”

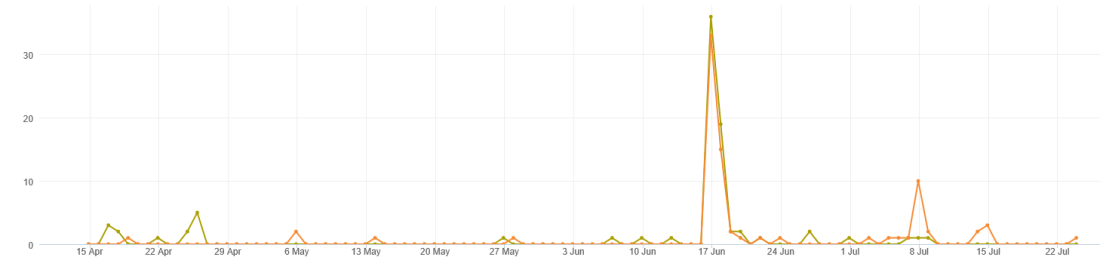

Given that there is conflicting information, we wanted to see whose idea and data win out: the Centre for Economic Policy Research (CEPR) or the Bertelsmann Foundation (BF)? To do this we looked to see which study was referenced most. The chart below shows the mentions of each organization within the TTIP/TAFTA conversation over the last 100 days. The Centre for Economic Policy Research is in orange and the Bertelsmann Foundation is in green.

In total both studies were cited almost the same amount:

- Centre for Economic Policy Research: 80 mentions

- Bertelsmann Foundation: 83 mentions

- Both organizations were mentioned together 53 times.
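For readers who want to reproduce this kind of tally, the counting logic can be sketched as below. This is only an illustration under stated assumptions: the post does not say which tool produced the numbers, the sample documents are invented, and a “mention” is assumed to mean a document containing the organization’s name as a case-insensitive substring.

```python
def count_mentions(documents, term_a, term_b):
    """Count documents mentioning term_a, term_b, and both together."""
    count_a = count_b = count_both = 0
    for doc in documents:
        text = doc.lower()
        has_a = term_a.lower() in text
        has_b = term_b.lower() in text
        count_a += has_a
        count_b += has_b
        count_both += has_a and has_b
    return count_a, count_b, count_both


# Hypothetical snippets standing in for the mined web content.
documents = [
    "The Centre for Economic Policy Research estimates a large boost.",
    "A Bertelsmann Foundation report says the US may benefit more.",
    "Centre for Economic Policy Research and Bertelsmann Foundation disagree.",
    "Negotiators met in Washington this week.",
]

cepr, bf, both = count_mentions(
    documents, "Centre for Economic Policy Research", "Bertelsmann Foundation"
)
print(cepr, bf, both)
```

A real pipeline would also need to handle spelling variants such as “Center” and abbreviations such as “CEPR”, which plain substring matching misses.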

More recently, though, the trend shows the Centre for Economic Policy Research being cited most in the last 30 days, including a large uptick on July 8th. This is mainly because a larger share of the sources are located in the US, and the US wants to get a deal done faster than the more hesitant Europeans. Keep in mind the CEPR study claims larger benefits from TAFTA/TTIP than the BF study.

Where are the mentions?

- The US had 2,743 mentions (49% overall)

- All of Europe combined total was 1,986 (36% overall)
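The shares above can be checked with a few lines of arithmetic. The sketch assumes the base is the 5,505 total mentions reported earlier, which the post does not state explicitly. The variable names are illustrative.

```python
TOTAL_MENTIONS = 5505  # total TTIP/TAFTA mentions reported for the 100 days

mentions = {"US": 2743, "Europe": 1986}


def share(count, total):
    """Return count as a percentage of total."""
    return 100 * count / total


shares = {region: share(n, TOTAL_MENTIONS) for region, n in mentions.items()}
elsewhere = TOTAL_MENTIONS - sum(mentions.values())

for region, pct in shares.items():
    print(f"{region}: {pct:.1f}%")
print(f"Elsewhere: {elsewhere} mentions ({share(elsewhere, TOTAL_MENTIONS):.1f}%)")
```

On that assumption the US share works out to about 49.8% and Europe’s to about 36.1%, in line with the rounded figures above, leaving 776 mentions (roughly 14%) from the rest of the world or from unlocated sources.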

Of the topics that ACTA is still being talked about with, IP and data protection top the list. This is not surprising given France’s reluctance to be agreeable because of the former, and given #prism, so those themes are plotted below.

The top stories on Twitter are in the table below. It’s not surprising that the White House is number one, but where are the EU institutions and media on this?

| Top Stories | Tweets | Retweets | All Tweets | Impressions |
| --- | --- | --- | --- | --- |
| White House | 37 | 15 | 52 | 197738 |
| Huff Post | 26 | 0 | 26 | 54419 |
| Forbes | 23 | 0 | 23 | 1950786 |
| JD Supra | 15 | 2 | 17 | 42304 |
| Wilson Center | 12 | 7 | 19 | 72424 |
|  | 12 | 0 | 12 | 910 |
| Italia Futura | 8 | 0 | 8 | 16640 |
| BFNA | 7 | 0 | 7 | 40246 |
| Citizen.org | 7 | 13 | 20 | 69356 |
| Slate | 7 | 0 | 7 | 13927 |

Everybody knows the battle for hearts and minds of people starts with a good acronym so I broke down the market share between TTIP (165) and TAFTA (2197):

I may add more in the coming days, but those are a few simple bits of info for now. Nonetheless, if you want to join the conversation on Twitter, the top hashtags are below.

Barroso and Kerry Analysis

I decided to break down the events of John Kerry’s visit to José Manuel Barroso. The reactions are not as drastic as I would have thought, considering GMOs and IP are two issues that people care about on both continents, and the Free Trade Agreement is making some actual headway. We will have more on this in the future, but here are small bits of data for starters.

We see that the John Kerry and Barroso event was reported on in Brussels 33 times, much more than in any other area except the US as a whole. Below we see the events, organizations, and people that both of them are tied to.

Cross referencing what each leader said within the context above is where it gets interesting. Questions to ask: Who were their targets? Did they attain any impact?

When I first arrived in Brussels, I was always amazed at how disconnected D.C. was from Brussels, so I decided to look at who was following whom. We see that only 1.1% follow both. In short, they are disconnected networks. For comparison’s sake I also tried Obama vs. Barroso, but since Obama has over 30 million followers, the tools I was using at the time couldn’t process such large amounts of data.
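The “follow both” figure is essentially a set overlap. A minimal sketch of that calculation follows. The follower IDs are invented, and the choice of denominator (everyone who follows at least one of the two accounts) is an assumption, since the post does not say how the 1.1% was calculated.

```python
def overlap_share(followers_a, followers_b):
    """Share of all followers (of either account) who follow both accounts."""
    both = followers_a & followers_b
    either = followers_a | followers_b
    return len(both) / len(either) if either else 0.0


# Hypothetical follower ID sets for two accounts.
followers_a = {"u1", "u2", "u3", "u4"}
followers_b = {"u4", "u5", "u6"}

share_both = overlap_share(followers_a, followers_b)
print(f"{share_both:.1%} follow both")
```

Holding both follower lists in memory as sets is fine at this scale. An account with tens of millions of followers is where this naive approach, like the tools mentioned above, starts to struggle.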

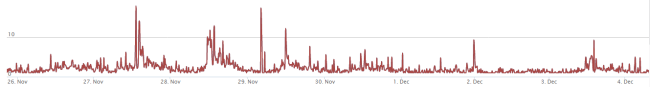

A Quick look at 20,000 Tweets about the EU

From October 25 to December 4th there were 20,022 tweets containing the words European Union, European Parliament and European Commission. This means the tweets were quite specific and could not be mistaken for anything else. The majority of posts were, of course, in English, although more than 30 languages were represented. Many of the quick findings reinforce numerous social network analysis studies which show that most network opinions and frames are controlled by a minority of people, between 10 and 20%, i.e. the elite level. Social media, despite a lot of hype, has not changed this.

The context surrounding these 20K Tweets

- Were produced by 13,832 accounts

- Retweets made up 28% of all Tweets

- The Top 18 Tweets – in terms of most retweeted, made up 9% of all tweets

- Top 18 Tweets that made up 9% of all tweets were made by 11 accounts

- The most visible and retweeted Tweet was a collaboration with FC Barcelona (below). (This leads me to question: why are there not more collaborations between the public and private sector in the EU?)
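The summary figures in the list above (unique accounts, retweet share, and how much of the volume the top tweets account for) can be derived from a tweet export along these lines. This is a sketch only. The record layout (`id`, `account`, `retweet_of`) is a hypothetical one, and “top tweets made up X% of all tweets” is interpreted here as retweets of the most-retweeted originals divided by the total tweet count.

```python
from collections import Counter


def summarize(tweets, top_n):
    """Compute unique accounts, retweet share, and top-N concentration."""
    accounts = {t["account"] for t in tweets}
    retweets = [t for t in tweets if t["retweet_of"] is not None]
    # How many times each original tweet was retweeted.
    retweet_counts = Counter(t["retweet_of"] for t in retweets)
    top_volume = sum(count for _, count in retweet_counts.most_common(top_n))
    return {
        "accounts": len(accounts),
        "retweet_share": len(retweets) / len(tweets),
        "top_share": top_volume / len(tweets),
    }


# Tiny invented sample: `retweet_of` is None for original tweets.
tweets = [
    {"id": 1, "account": "a", "retweet_of": None},
    {"id": 2, "account": "b", "retweet_of": 1},
    {"id": 3, "account": "c", "retweet_of": 1},
    {"id": 4, "account": "b", "retweet_of": None},
    {"id": 5, "account": "d", "retweet_of": 4},
]

summary = summarize(tweets, top_n=1)
print(summary)
```

Run against the full export with `top_n=18`, the same function would give figures comparable to the ones listed above, if that interpretation matches how the originals were computed.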

Top Accounts from Top 18 Tweets:

- Wikileaks – 6 Tweets in Top 18 (33%)

- Economist – 2 tweets in top 18 (11%)

Top users commenting on the Euro. UKIP seems to be taking a proactive approach to framing its primary fodder against the EU (the Euro crisis).

- @UKIP 32

- @YanniKouts 23

- @lindayueh 18

- @AssangeC 9

- @LSEpublicevents 8

Your next firm should be an IT firm.

I’ve interviewed with them all. The big firms, the small firms. Both in Brussels and in New York.

After working in the European Commission and Parliament, I wanted to go to the private side of communications and policy. The problem? I had been running data and insights. Using terms like NLP, sentiment analysis, cognitive science, and connotation mapping to describe what I did didn’t work well. On the other hand, dumbing it down would leave me looking like another 27-year-old who does “the social media” and/or “the internets”. Most firms interviewing for analytics positions wanted to hear “pivot table”, “engagement”, “influence”, and maybe SPSS. Going a level above those terms to explain why “something was” appeared unnecessary and impractical to them, since explaining this to a client’s communications director is another task in itself.

So to hell with the PA/PR firms, I joined an IT firm that also does communications – Intrasoft International, and could not be happier. The people’s skill sets are well defined and paid, which gives them a certain confidence in contrast. They like things such as analytics and completely understand them. Their only fault is the soft side of Communications – which is now my job to merge.

IT is going to take over communications sooner rather than later. Current PR firms will be left scrambling. The lack of investment in the deeper meaning (abstract knowledge such as transference, retention, and pragmatics) will start to show as data/connotation mining becomes a standard practice. Most IT firms already have this infrastructure in place via their AI departments.

There will be a point where dropping shit jargon is irrelevant, and a company’s Comm Director, who should be more of a CIO/CTO in the near future, will see right through it.

My 2 cents. Also check out my presentation at chandlerthomas.com

CT